RKE2 generates the config.toml for containerd at /var/lib/rancher/rke2/agent/etc/containerd/config.toml. For advanced customization of this file you can create a separate template file in the same directory, and it will be used instead. That file is treated as a Go template, and the config.Node structure is passed to the template. See this template for an example of how to use the structure to customize the configuration file.

If you are running RKE2 in an environment that only has external connectivity through an HTTP proxy, you can configure your proxy settings on the RKE2 systemd service. These proxy settings are then used by RKE2 and passed down to the embedded containerd and kubelet.

Add the necessary HTTP_PROXY, HTTPS_PROXY and NO_PROXY variables to the environment file of your systemd service. RKE2 automatically adds the cluster-internal Pod and Service IP ranges and the cluster DNS domain to the list of NO_PROXY entries. You should ensure that the IP address ranges used by the Kubernetes nodes themselves (i.e. the public and private IPs of the nodes) are included in the NO_PROXY list, or that the nodes can be reached through the proxy.

RKE2 agents can be configured with the node-label and node-taint options, which add a label and a taint to the kubelet. The two options only add labels and/or taints at registration time; they can only be added once and cannot be removed afterwards through rke2 commands. If you want to change node labels and taints after node registration, use kubectl. Refer to the official Kubernetes documentation for details on how to add taints and node labels.
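
As a rough sketch of the post-registration route (changing labels and taints with kubectl), the Python helper below builds the kubectl label and kubectl taint invocations for a node that is already registered. The function names, the node name and the label and taint values are placeholders chosen for illustration and are not part of RKE2. The script only prints the commands so they can be reviewed before being run.

```python
import shlex

# Taint effects accepted by Kubernetes.
ALLOWED_EFFECTS = {"NoSchedule", "PreferNoSchedule", "NoExecute"}


def label_command(node, key, value, overwrite=True):
    """Build the argv that sets (or updates) a label on a registered node."""
    argv = ["kubectl", "label", "node", node, f"{key}={value}"]
    if overwrite:
        # Without --overwrite, kubectl refuses to change an existing label.
        argv.append("--overwrite")
    return argv


def taint_command(node, key, value, effect="NoSchedule"):
    """Build the argv that adds a taint to a registered node."""
    if effect not in ALLOWED_EFFECTS:
        raise ValueError(f"unknown taint effect: {effect!r}")
    return ["kubectl", "taint", "node", node, f"{key}={value}:{effect}"]


if __name__ == "__main__":
    # "worker-1", "environment=staging" and "dedicated=gpu" are made-up values.
    print(shlex.join(label_command("worker-1", "environment", "staging")))
    print(shlex.join(taint_command("worker-1", "dedicated", "gpu")))
```

Printing instead of executing keeps the sketch free of side effects. The resulting lists could be passed to subprocess.run once the values have been checked.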

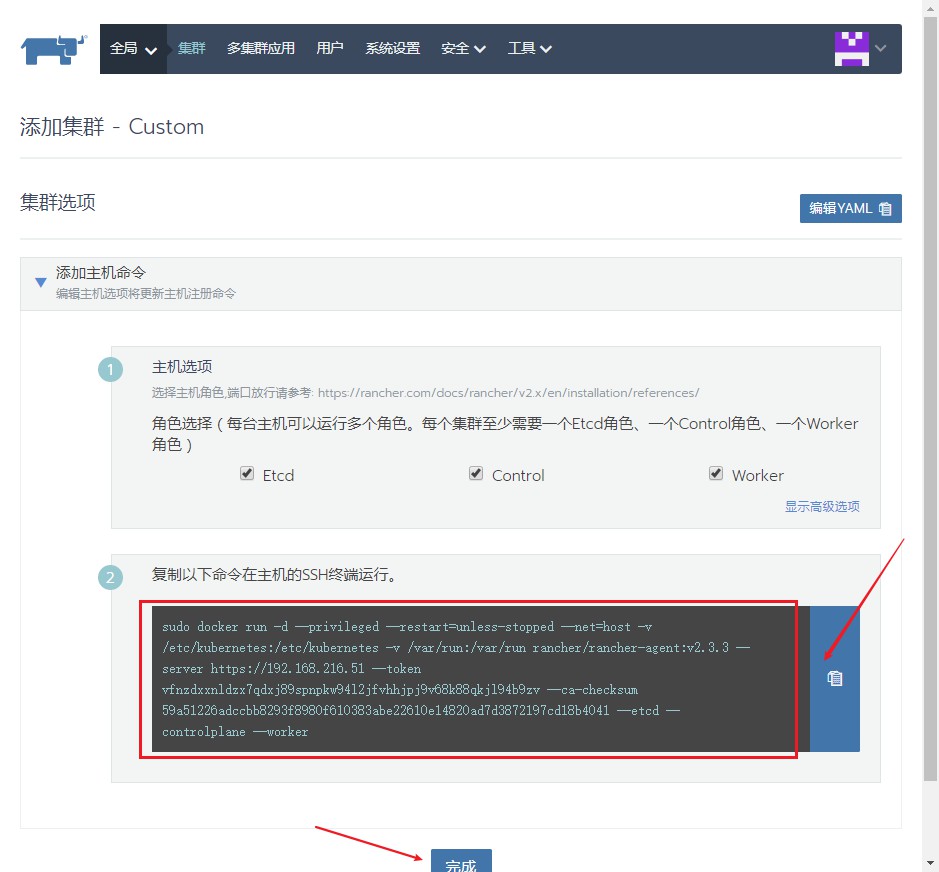
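
One way to sanity-check the NO_PROXY guidance is to test whether every node IP falls inside an IP or CIDR entry of the list. The sketch below is an illustration under stated assumptions: uncovered_nodes is a hypothetical helper, the addresses are made up, and it assumes that the programs reading NO_PROXY honour CIDR notation. Hostname and domain-suffix entries are skipped.

```python
import ipaddress


def uncovered_nodes(node_ips, no_proxy):
    """Return the node IPs that no IP/CIDR entry in NO_PROXY covers."""
    networks = []
    for entry in no_proxy.split(","):
        entry = entry.strip()
        if not entry:
            continue
        try:
            # strict=False tolerates host bits, e.g. "10.0.0.5/24".
            networks.append(ipaddress.ip_network(entry, strict=False))
        except ValueError:
            # Not an IP or CIDR (for example ".svc" or "localhost"): skip it.
            continue

    missing = []
    for ip in node_ips:
        addr = ipaddress.ip_address(ip)
        if not any(addr in net for net in networks):
            missing.append(ip)
    return missing


if __name__ == "__main__":
    # Example values only; substitute the real node addresses and NO_PROXY.
    no_proxy = "127.0.0.0/8,10.0.0.0/8,.svc,.cluster.local,192.168.1.10"
    nodes = ["10.1.2.3", "192.168.1.10", "203.0.113.7"]
    print(uncovered_nodes(nodes, no_proxy))
```

Any address the helper returns would either need to be added to NO_PROXY or be reachable through the proxy.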

Auto-Deploying Manifests

Any file found in /var/lib/rancher/rke2/server/manifests will automatically be deployed to Kubernetes in a manner similar to kubectl apply. For information about deploying Helm charts using the manifests directory, refer to the section about Helm.

It is also possible to rotate the certificates of an individual service by passing the --service flag, for example: rke2 certificate rotate --service api-server. See Certificate Management for more details.

Looking for some guidance on diagnosing a control plane provisioning issue

Rancher version 2.6.5, k8s version v1.23.10-rancher1-1

Deploying a cluster through Cluster Management with the vSphere provider, RKE1. I have node pools for worker and control plane nodes.

The control plane node is stuck at "Waiting to register with Kubernetes". This is the first control plane in the cluster.

The control plane VM provisions fine and it starts, but the Rancher agent on the control plane node is logging:

INFO: Arguments: -server -token REDACTED -ca-checksum be3ae53c5b65e299b9b21ae7d757c97547d7357d438e585dfd426e2d493d3519 -r -n m-sv82n
INFO: Environment: CATTLE_ADDRESS=XXX.XXX.210.7 CATTLE_AGENT_CONNECT=true CATTLE_INTERNAL_ADDRESS= CATTLE_NODE_NAME=m-sv82n CATTLE_SERVER= CATTLE_TOKEN=REDACTED
INFO: Using nf: nameserver 127.0.0.53 options edns0 trust-ad search _REDACTED_
WARN: Loopback address found in /etc/nf, please refer to the documentation how to configure your cluster to resolve DNS properly
Time="" level=info msg="Listening on /tmp/log.sock"
Time="" level=info msg="Rancher agent version v2.6.5 is starting"
Time="" level=info msg="Option customConfig=map roles: taints:]"
Time="" level=info msg="Option etcd=false"
Time="" level=info msg="Option controlPlane=false"
Time="" level=info msg="Option worker=false"
Time="" level=info msg="Option requestedHostname=m-sv82n"
Time="" level=info msg="Option dockerInfo="
Time="" level=info msg="Connecting to wss://XXX.XXX.209.125/v3/connect with token starting with 2lxpm6568f4bmmjhc9nh5lf6svk"
Time="" level=info msg="Connecting to proxy" url="wss://XXX.XXX.209.125/v3/connect"
Time="" level=info msg="Waiting for node to register. Either cluster is not ready for registering, cluster is currently provisioning, or etcd, controlplane and worker node have to be registered"

I don't understand why the agent is logging Option etcd=false and Option controlPlane=false, or whether this is normal. If I look at m-sb82n's YAML in the Rancher UI, it has ntrolPanel:true and spec.etcd: true.

On the Rancher UI cluster page it shows "Provisioning" and "Waiting for etcd, controlplane, and worker nodes to be registered".

In the Rancher-side logs I can see all of the provisioning logs:

…
0 19:24:22 Provisioning node eta2-k8s-m1 done
19:24:22 Generating and uploading node config eta2-k8s-m1
19:24:22 Handling backend connection request
19:24:22 could not get kubeconfig for cluster c-gpp9t
19:24:22 Found as node name in cluster, error:

I don't know whether the "could not get kubeconfig" message is indicative of a problem or not.
